Predictive Maintenance: A Proactive Approach

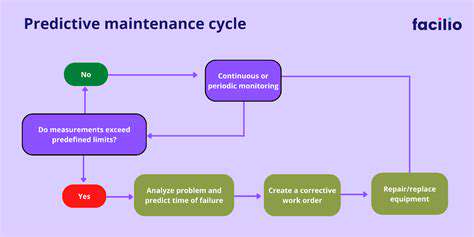

Predictive maintenance (PdM) is a proactive approach to equipment maintenance that uses data and analytics to predict equipment failures before they occur. This contrasts with reactive maintenance, which addresses failures only after they happen. PdM shifts the focus from fixing problems to preventing them, which can lower costs and improve operational efficiency. By drawing on data from sources such as sensors, operational logs, and historical maintenance records, PdM systems identify patterns and anomalies that indicate impending failures.

PdM helps businesses anticipate issues and schedule maintenance at the times that disrupt production least, which minimizes downtime. Because problems are caught before they affect production, operations tend to be more reliable and efficient. Early detection also lets businesses take preventive measures, which lowers maintenance costs and reduces disruptions to the production process.

Key Benefits of Predictive Maintenance

PdM offers several benefits. The first is reduced downtime: maintenance can be scheduled during periods of low operational demand, which limits the impact on production schedules and overall productivity. It also tends to reduce unexpected equipment failures and the disruptions they cause.

Improved equipment reliability and a longer equipment lifespan are further benefits. By identifying and addressing issues before they escalate, PdM extends the life of equipment and reduces the frequency of unexpected breakdowns, which lowers costs over the long term. Its proactive nature can also lead to more efficient use of resources, including personnel and materials.

Data Analytics in Predictive Maintenance

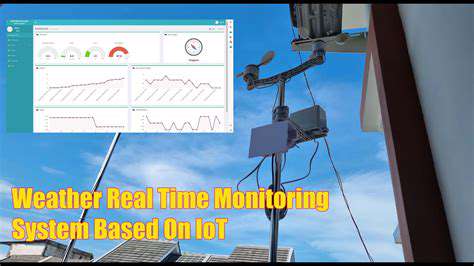

A crucial element of PdM is data analytics: collecting, analyzing, and interpreting data from sources such as sensor readings, operational logs, and historical maintenance records. Statistical algorithms and machine learning techniques are used to identify patterns and anomalies that indicate potential failures. The value of this analysis lies in uncovering trends that are not visible by inspection and predicting future issues, so that businesses can intervene in a timely, targeted way and minimize downtime.
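One simple way to flag anomalies in a sensor stream is a rolling z-score: each reading is compared with the mean and standard deviation of the readings just before it. The sketch below is a minimal illustration in base R, not a production detector. The function name `flag_anomalies`, the window of 20 readings, the threshold of 3, and the synthetic vibration signal are all assumptions made for the example.

```r
# Flag readings that deviate strongly from the recent past.
# `window` and `threshold` are illustrative assumptions, not tuned values.
flag_anomalies <- function(x, window = 20, threshold = 3) {
  n <- length(x)
  flagged <- rep(FALSE, n)
  if (n <= window) return(flagged)
  for (i in (window + 1):n) {
    past <- x[(i - window):(i - 1)]  # trailing window, excludes the current reading
    s <- sd(past)
    # Skip windows with missing data or no variation.
    if (!is.na(s) && s > 0 && !is.na(x[i])) {
      flagged[i] <- abs(x[i] - mean(past)) / s > threshold
    }
  }
  flagged
}

# Synthetic vibration signal with one injected spike (made-up data).
set.seed(42)
vibration <- rnorm(200, mean = 1.0, sd = 0.05)
vibration[150] <- 2.0

which(flag_anomalies(vibration))  # indices of flagged readings, including 150
```

A trailing window keeps the check usable on live data, since it never looks ahead. Real systems usually combine several signals and tune the threshold against known failure history.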

Implementation and Challenges of Predictive Maintenance

Implementing a PdM system requires careful planning and execution. Businesses need to establish data collection strategies, deploy appropriate analytical tools, and train personnel to use the system effectively. This often involves significant upfront investment in technology and training, which can be a challenge for some organizations. Data quality is also critical: predictions are only as accurate as the data behind them. Finally, integrating PdM into existing maintenance processes can be complex and may require changes to established workflows.

Enhanced Quality Control through Real-Time Monitoring

Implementing Robust Quality Checks with R

R, a programming language for statistical computing, offers a broad set of tools for implementing quality control procedures. These tools can automate data validation, identify outliers, and check data integrity at various stages of a project. Automating these checks streamlines the process and reduces the risk of errors propagating through the system. Beyond simple validation, R supports statistical models that assess data quality and reveal patterns that might otherwise go unnoticed.

Implementing quality control in R typically involves writing custom functions and using existing packages. Functions can be tailored to specific data types and quality standards, so that each check is targeted and efficient. This reduces the time spent on manual data inspection and makes the quality control process more productive.
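As an illustration of such a custom function, the sketch below marks outliers using Tukey's fences (values more than `k` times the interquartile range beyond the quartiles). The name `is_outlier_iqr` and the sample weights are hypothetical; `k = 1.5` is the conventional default, not a requirement.

```r
# Return a logical vector marking values outside the Tukey fences
# (Q1 - k * IQR, Q3 + k * IQR). Missing values are never marked.
is_outlier_iqr <- function(x, k = 1.5) {
  q <- quantile(x, probs = c(0.25, 0.75), na.rm = TRUE, names = FALSE)
  spread <- q[2] - q[1]
  !is.na(x) & (x < q[1] - k * spread | x > q[2] + k * spread)
}

# Made-up measurements with one implausible value and one missing value.
weights_kg <- c(10.1, 9.8, 10.3, 10.0, 9.9, 55.0, NA, 10.2)
is_outlier_iqr(weights_kg)
# Only the sixth value (55.0) is marked TRUE.
```

Because the function returns a logical vector of the same length as its input, it can be used directly for subsetting, for example `weights_kg[is_outlier_iqr(weights_kg)]`.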

Utilizing R Packages for Enhanced Analysis

A significant advantage of using R for quality control lies in its extensive ecosystem of packages. These specialized packages often contain pre-built functions and algorithms that are specifically designed for data analysis and validation. For instance, packages like 'dplyr' and 'tidyr' facilitate data manipulation and cleaning, while packages like 'ggplot2' provide tools for visualizing data and identifying potential anomalies. This streamlined approach accelerates the process of quality control, allowing for quicker identification of issues and faster remediation.
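A short sketch of how 'dplyr' and 'tidyr' might be combined for a first cleaning pass is shown below. It assumes both packages are installed (dplyr 1.0 or later for `across()`); the `readings` data frame and the plausible temperature range of -40 to 60 °C are invented for the example.

```r
library(dplyr)
library(tidyr)

# Made-up sensor readings with gaps and one implausible temperature.
readings <- data.frame(
  sensor   = c("A", "A", "B", "B", "B"),
  temp_c   = c(21.5, NA, 22.1, 150.0, 21.9),
  humidity = c(40, 42, NA, 41, 39)
)

# Count missing values per column, reshaped to one row per column.
missing_summary <- readings %>%
  summarise(across(everything(), ~ sum(is.na(.x)))) %>%
  pivot_longer(everything(), names_to = "column", values_to = "n_missing")

# Drop incomplete rows, then mark temperatures outside the assumed range.
cleaned <- readings %>%
  drop_na() %>%
  mutate(temp_suspect = temp_c < -40 | temp_c > 60)

missing_summary
cleaned
```

Here `missing_summary` reports one missing value each for `temp_c` and `humidity`, and `cleaned` keeps three complete rows, with the 150.0 reading marked as suspect. A 'ggplot2' histogram or boxplot of `temp_c` would make the same outlier visible at a glance.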

Automated Data Validation with R Scripts

R scripts can be designed to automate the entire data validation process. This automation significantly reduces the risk of human error and ensures consistency across different datasets. The scripts can be programmed to perform checks for missing values, unusual data distributions, and inconsistencies in data formats, all while providing detailed reports for easy interpretation. This automated approach not only boosts efficiency but also ensures that data quality standards are consistently upheld.
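The sketch below shows one possible shape for such a script: a function that runs a few checks and returns the results as a report table. The function name `validate_dataset`, its arguments, the `YYYY-MM-DD` date convention, and the `orders` data are assumptions for illustration. A real script would add checks for distributions and whatever else the dataset requires.

```r
# Run basic checks on a data frame and return a report with one row per check.
validate_dataset <- function(df, date_col, numeric_cols) {
  rows <- list()

  # Check 1: missing values in every column.
  for (col in names(df)) {
    rows[[length(rows) + 1]] <- data.frame(
      check = "missing", column = col, n_failed = sum(is.na(df[[col]]))
    )
  }

  # Check 2: dates must look like YYYY-MM-DD (format only; NAs are counted above).
  dates <- df[[date_col]]
  bad_format <- !is.na(dates) & !grepl("^[0-9]{4}-[0-9]{2}-[0-9]{2}$", dates)
  rows[[length(rows) + 1]] <- data.frame(
    check = "date_format", column = date_col, n_failed = sum(bad_format)
  )

  # Check 3: columns expected to be numeric must have a numeric type.
  for (col in numeric_cols) {
    rows[[length(rows) + 1]] <- data.frame(
      check = "is_numeric", column = col,
      n_failed = as.integer(!is.numeric(df[[col]]))
    )
  }

  do.call(rbind, rows)
}

# Made-up input with one badly formatted date and two missing values.
orders <- data.frame(
  order_date = c("2024-01-05", "05/01/2024", NA, "2024-01-07"),
  amount     = c(19.99, 5.00, 12.50, NA)
)

report <- validate_dataset(orders, date_col = "order_date", numeric_cols = "amount")
print(report)
```

Returning the report as a data frame rather than printing messages makes it easy to save, filter for failures (`report[report$n_failed > 0, ]`), or compare across datasets.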

Furthermore, the scripting capability allows for the creation of dynamic checks that adapt to the changing nature of the data. As the dataset evolves, the scripts can be updated to incorporate new validation rules, ensuring that the quality control measures remain relevant and effective throughout the project lifecycle. This adaptability is a significant advantage over static validation methods.
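One way to keep checks easy to update is to store the validation rules as data, for example a named list of functions, so that adding a rule does not require changing the code that applies them. The rule names, the `apply_rules` helper, and the pressure values below are hypothetical.

```r
# Each rule takes a vector and returns TRUE where the value is acceptable.
rules <- list(
  non_negative = function(x) x >= 0,
  below_limit  = function(x) x <= 100
)

# For each rule, count the non-missing values that violate it.
apply_rules <- function(x, rules) {
  vapply(rules, function(rule) sum(!rule(x), na.rm = TRUE), integer(1))
}

pressure <- c(12, 55, -3, 140, 88)  # made-up readings
apply_rules(pressure, rules)
# One violation for each of the two rules (-3 and 140).

# When requirements change, add a rule; apply_rules() itself is untouched.
rules$whole_number <- function(x) x == round(x)
apply_rules(pressure, rules)
```

The same pattern extends to loading rules from a configuration file, at the cost of some extra parsing code.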

Advanced Statistical Methods for Quality Assessment

Beyond basic validation, R enables the application of advanced statistical methods for a comprehensive assessment of data quality. Techniques like statistical process control (SPC) can be implemented to monitor data trends and identify potential deviations from expected patterns. This proactive approach to quality control can help to anticipate potential issues before they impact the final product or outcome. R provides the tools to not just identify problems but also to understand the underlying causes of quality variations.
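As a minimal SPC illustration, the sketch below computes limits for an individuals control chart: the center line is the mean of a baseline sample, and the limits sit three estimated standard deviations either side, with sigma estimated from the average moving range divided by the constant 1.128. The function name `control_limits` and all data values are made up for the example.

```r
# Control limits for an individuals (X) chart.
control_limits <- function(x) {
  center <- mean(x)
  moving_range <- abs(diff(x))              # differences between consecutive points
  sigma_hat <- mean(moving_range) / 1.128   # d2 constant for a moving range of 2
  c(lcl = center - 3 * sigma_hat,
    center = center,
    ucl = center + 3 * sigma_hat)
}

# Made-up baseline measurements, assumed to come from an in-control process.
baseline <- c(10.2, 9.9, 10.1, 10.0, 9.8, 10.3, 10.1, 9.9, 10.0, 10.2)
limits <- control_limits(baseline)

# Compare new measurements against the limits.
new_points <- c(10.1, 9.7, 11.4)
out_of_control <- new_points < limits[["lcl"]] | new_points > limits[["ucl"]]
out_of_control
# Only the last point (11.4) falls outside the limits.
```

Dedicated packages offer fuller control-chart functionality; this base R version is only meant to show the arithmetic behind the limits.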

R's support for regression analysis and other statistical tests allows a deeper understanding of relationships within the data and helps pinpoint the factors that influence data quality. This level of analysis, rarely practical by hand, shows organizations where to act to improve data quality and prevent future issues.
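The sketch below shows how a linear regression with `lm()` might be used to ask which factors are associated with data errors. Everything here is synthetic: the variable names are hypothetical, and the error counts are generated so that they depend on `operator_hours` but not on `batch_size`, which lets the result be checked against a known answer.

```r
set.seed(1)
n <- 100

# Synthetic candidate factors (made-up ranges).
batch_size     <- runif(n, 100, 1000)
operator_hours <- runif(n, 1, 12)

# Synthetic outcome: by construction, errors rise with operator_hours only.
error_count <- 2 + 0.5 * operator_hours + rnorm(n, sd = 0.5)

fit <- lm(error_count ~ batch_size + operator_hours)

# Estimates, standard errors, t values and p-values for each term.
summary(fit)$coefficients
```

With this setup, the estimated coefficient for `operator_hours` should land close to the true value of 0.5, while the `batch_size` coefficient should be near zero. On real data, an association found this way is a starting point for investigation, not proof of cause.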